Machine Learning

Ongoing notes on machine learning and AI systems, including forecasting models, evaluation techniques, and practical workflows for financial and technical analysis.

AI will follow a much more logarithmic growth than the hype may lead you to believe

We've been perpetually '6 months away from AGI', yet as amazing as the SOTA generative models are, we're nowhere near that.

I don't believe that the current generative ML architectures will be enough to deliver the promises even over the next decade. Generative models have their limits.

There's also a massive AI bubble in the U.S., with the same entities that overpromise on results directly benefiting from public funds, which get counted as revenue many times over. Maybe this needs a new term, like rerevenuezation (like rehypothecation with bonds) 😄

With that said, I don't think we're anywhere near realizing the full benefits of the tech that's already available today, agentic systems in particular.

"Random forest" is currently my favorite term from financial ML

... TREES MAKE FINANCIAL DECISIONS NOW?? 🌳😄

LLMs & AI Agents Didn't Replace Programming - They Evolved It

I've been programming with LLMs since 2022, implementing systems of varied complexity, both professionally and in exploratory/hobby projects. And I will continue to do so in 2026. However, it's important to be aware of their limits.

Vibe/agent coding for critical systems doesn't work well (yet).

You'll also be disappointed if you're trying to do something with financial markets (e.g. machine-learning-based models).

Vibe coded apps also frequently come with security vulnerabilities (for many apps this may be an acceptable compromise).

I don't think you'd be comfortable with driving a vehicle with all of its software vibe-coded, or with all code written by an agentic system, without thorough human investment.

LLMs are good at solving problems that have been solved many times before, but this abstraction quickly breaks apart if you delve with them into less developed topics, which frequently tend to be more complex. Pattern-matching-based prediction is very powerful, but you need enough patterns to learn from, alongside the correct context.

Additionally, vibe-coding a large codebase is one thing, while maintaining that same codebase is another.

Autopilot in commercial airplanes didn't deprecate carbon-based human pilots, but it did deprecate pilots who didn't commit to learning and integrating autopilot during the flight.

So is programming really dead, or has it merely evolved? 🤔

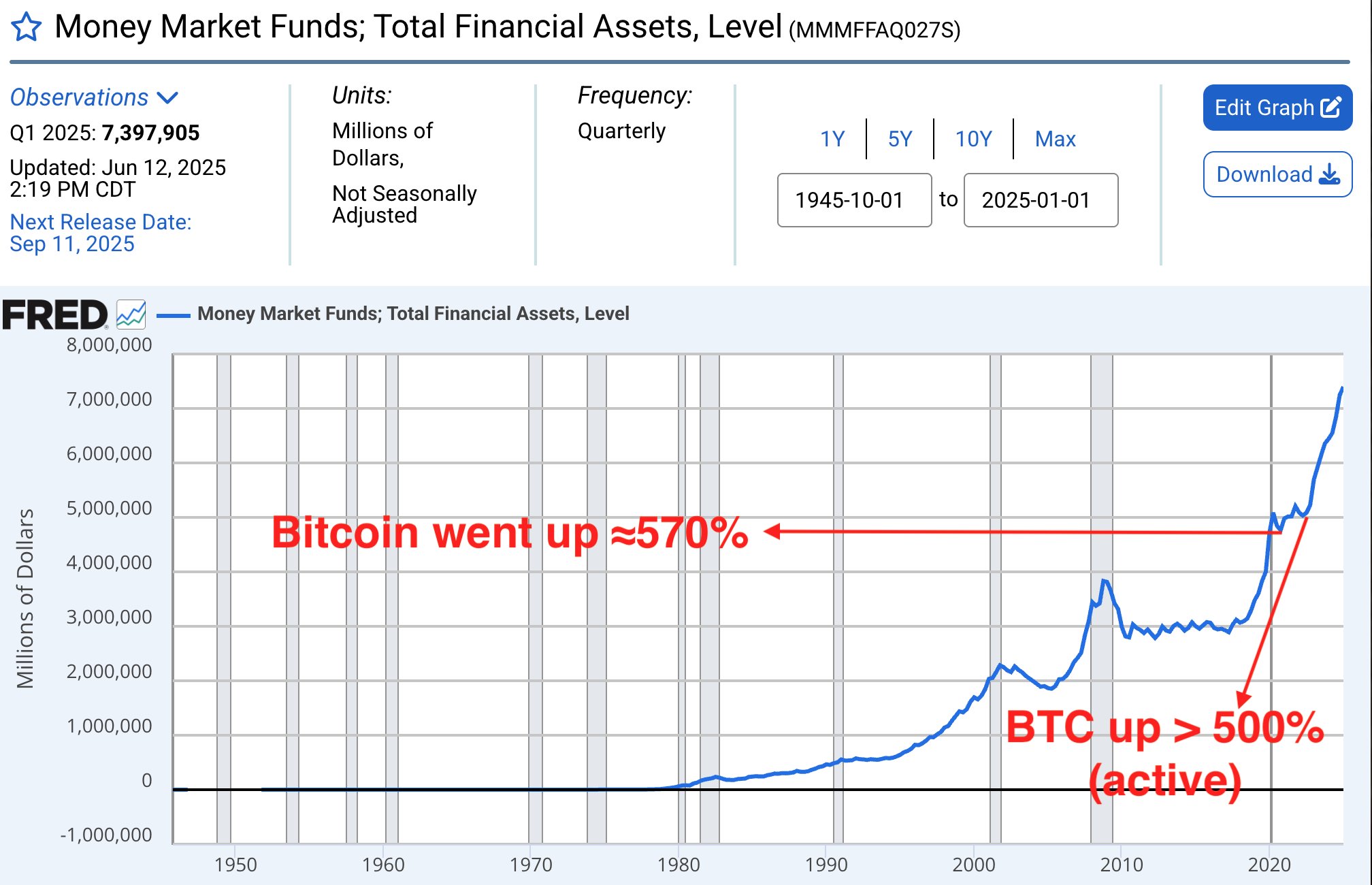

record $7 trillion USD in money market funds (mmf)

this risk-averse liquidity is bound to flow into other financial assets at some point

mmf consists of short-term collateralized loans - credit that is NOT captured by M2

last two outflows coincided with bitcoin bullrun

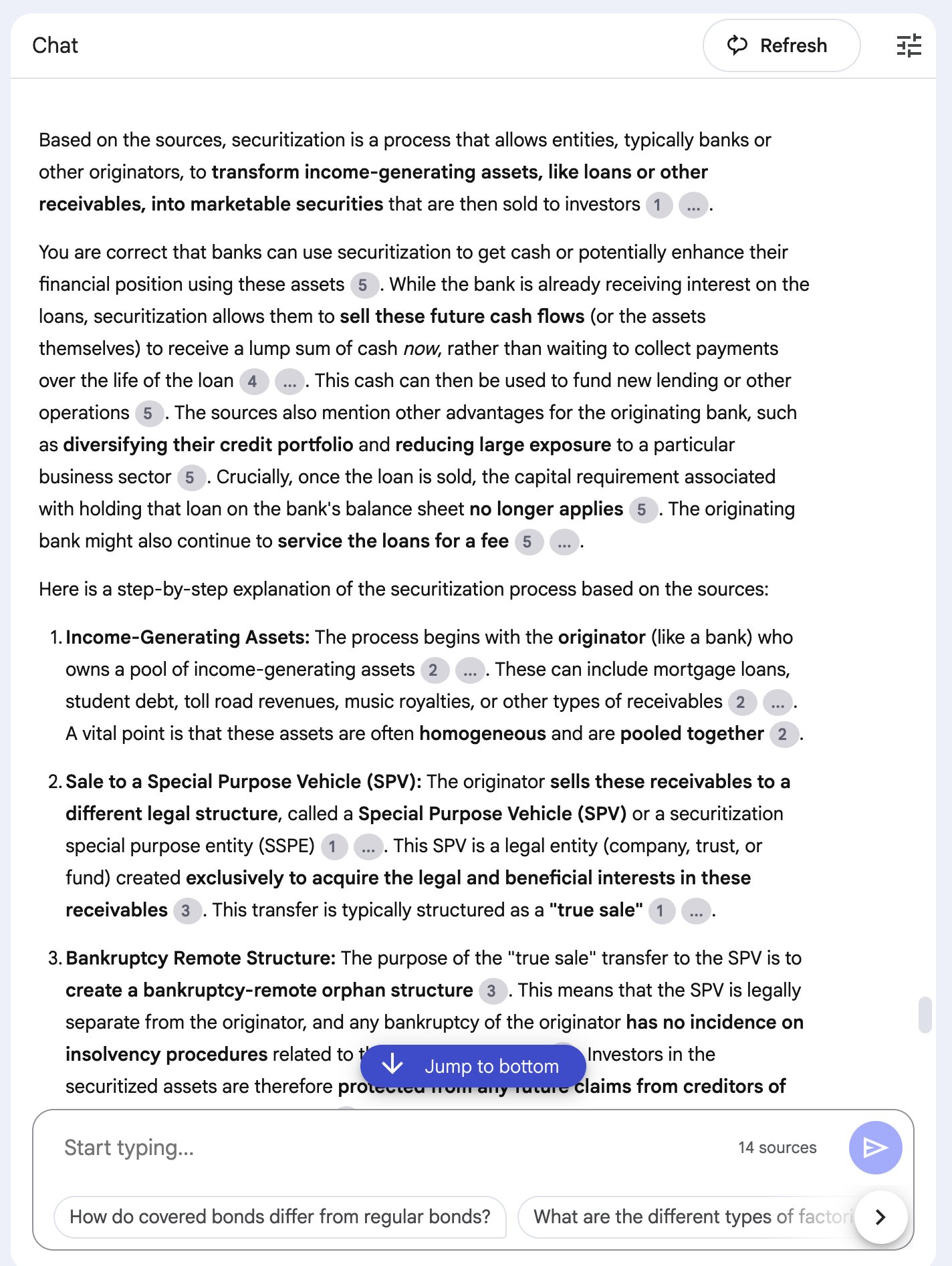

I've said this before, but I'm still amazed daily by how much LLMs have augmented the learning process

A much more efficient way of information retrieval.

Don't overthink the prompts - type or dictate your question, however unclear it is - neural networks will figure it out 🧠

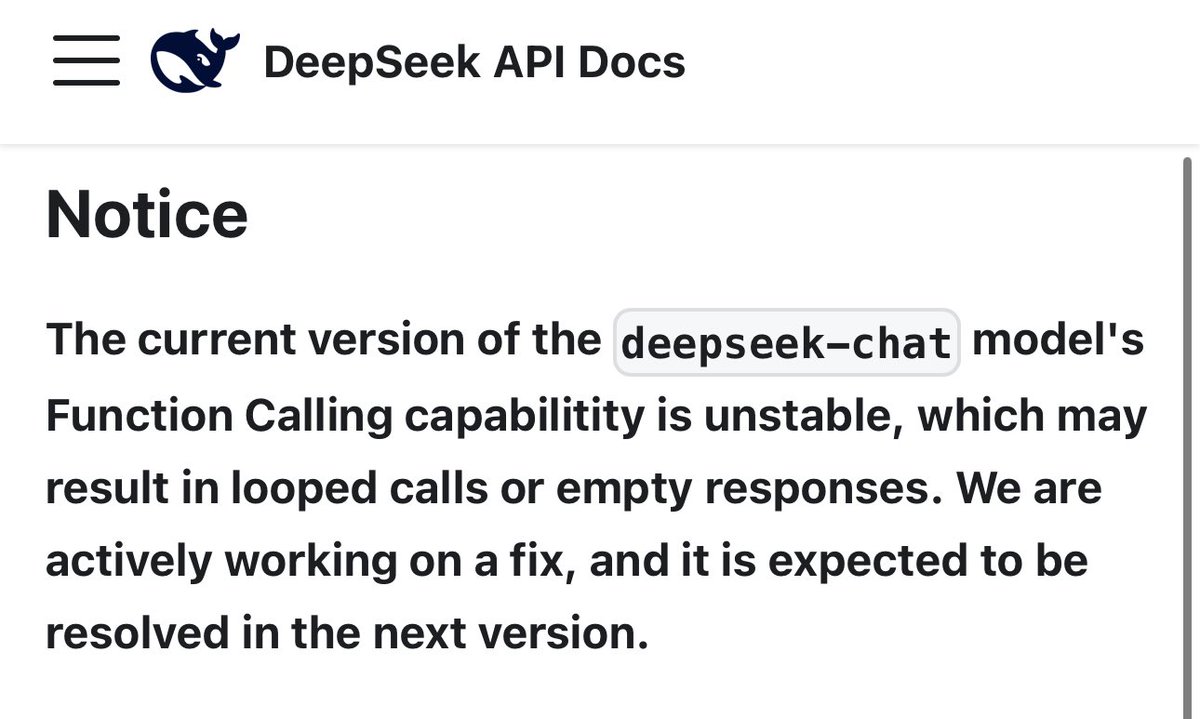

I'm working on an Agent and was debugging why the responses from the LLM come back as null/empty

Turns out function calling is currently broken on DeepSeek ☹️
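For this kind of debugging, a small check on the raw response helps separate "the model returned nothing" from "my parsing is wrong". Below is a minimal sketch, assuming an OpenAI-style chat completions response that has already been converted to a plain dict (`choices[0].message.content` and `choices[0].message.tool_calls`). The helper name `classify_reply` and the `get_price` tool are hypothetical, made up for illustration, and not taken from any SDK.

```python
import json
from typing import Any


def classify_reply(response: dict[str, Any]) -> tuple[str, Any]:
    """Classify an OpenAI-style chat completion dict (assumed shape).

    Returns ("tool_calls", [(name, args), ...]), ("text", str),
    or ("empty", reason) so that empty replies are never silently ignored.
    """
    choices = response.get("choices") or []
    if not choices:
        return "empty", "no choices in response"

    message = choices[0].get("message") or {}

    tool_calls = message.get("tool_calls") or []
    if tool_calls:
        parsed = []
        for call in tool_calls:
            fn = call.get("function") or {}
            try:
                # In this response shape, arguments are a JSON-encoded string.
                args = json.loads(fn.get("arguments") or "{}")
            except json.JSONDecodeError:
                return "empty", f"unparseable arguments for {fn.get('name')!r}"
            parsed.append((fn.get("name"), args))
        return "tool_calls", parsed

    content = (message.get("content") or "").strip()
    if content:
        return "text", content

    # Neither text nor tool calls: report why the model stopped, if known.
    return "empty", f"finish_reason={choices[0].get('finish_reason')!r}"


if __name__ == "__main__":
    broken = {"choices": [{"message": {"content": None, "tool_calls": None},
                           "finish_reason": "stop"}]}
    print(classify_reply(broken))
```

Logging the `"empty"` reason alongside the request makes it easier to tell a provider-side issue from a bug in the agent loop.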

🤯 Think about all of those US market outflows due to DeepSeek, Qwen & Co.

The crash in $NVDA and other AI-related stocks wasn't just about cheaper AI compute. The outflow also represents funds that may potentially be invested in the Chinese market

This point is often omitted 🤫 https://

Running LLMs locally really makes you appreciate the 'unlimited' bandwidth, which is a standard offering in most EU countries

GPT-4 outperforms both carbon-based financial analysts (humans) and purpose-specific ANNs

While the former is understandable, the latter is surprising. The broader context of an LLM appears to be more important than the specialized multi-dimensionality of an ANN