Using DuckDB To Train A Neural Network On 500GB Of Price Data

I have ≈500GB of historical Bitcoin level 1 limit order book data to process and train a neural network on.

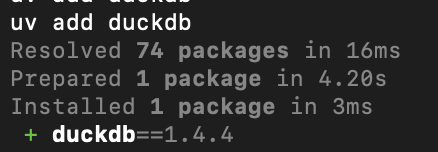

I don't want to overcomplicate the data access layer, and I definitely want to keep all of the training, validation and inference in Python. A simple file-based DuckDB setup sounds like a good fit: DuckDB runs in-process and already implements the abstractions needed to load large datasets lazily, so the training code can pull data into memory iteratively, one query at a time. That means I need neither 500GB of RAM nor a dedicated DBMS process.
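To make the iterative loading idea concrete, here is a minimal sketch of a batch iterator. It assumes a DB-API style connection, such as the one returned by `duckdb.connect()`, whose `execute()` result exposes `fetchmany()`. The helper name `iter_batches`, the file name, the `quotes` table and the batch size are all placeholders of mine, not part of any existing setup.

```python
from typing import Any, Iterator, Sequence


def iter_batches(
    con: Any,
    sql: str,
    params: Sequence[Any] = (),
    batch_size: int = 100_000,
) -> Iterator[list]:
    """Yield query results as lists of at most `batch_size` rows."""
    # Assumption: `con.execute()` returns an object with `fetchmany()`,
    # which holds for DB-API style connections.
    cursor = con.execute(sql, list(params))
    while True:
        rows = cursor.fetchmany(batch_size)
        if not rows:
            break  # result set exhausted
        yield rows


# Possible usage with DuckDB (file and table names are hypothetical):
#   import duckdb
#   con = duckdb.connect("lob.duckdb", read_only=True)
#   for batch in iter_batches(con, "SELECT * FROM quotes WHERE ts >= ? AND ts < ?", [t0, t1]):
#       ...  # convert the batch to tensors and feed the training step
```

Only one batch of rows is held by the Python side at a time, so the batch size is the main knob for trading memory against per-call overhead.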

The model will be trained using a walk-forward strategy, so several sets of trained weights will be generated, one per training window. Each window covers only part of the history, which means the amount of data loaded into memory at any one time will be limited either way. I may rely on DuckDB for some algebraic processing when querying data, but for now I'm planning to mostly filter for events within a specific time range.
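As a rough illustration of how walk-forward windows map onto time-range filters, here is a sketch that generates rolling train/validation ranges. The rolling (fixed-length) window scheme, the `Fold` and `walk_forward_folds` names, the span lengths, and the `quotes`/`ts` names in the query are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterator


@dataclass(frozen=True)
class Fold:
    train_start: datetime
    train_end: datetime  # exclusive; also the start of the validation range
    valid_end: datetime  # exclusive


def walk_forward_folds(
    start: datetime,
    end: datetime,
    train_span: timedelta,
    valid_span: timedelta,
) -> Iterator[Fold]:
    """Yield rolling folds; each step moves forward by one validation span."""
    train_start = start
    while train_start + train_span + valid_span <= end:
        train_end = train_start + train_span
        yield Fold(train_start, train_end, train_end + valid_span)
        train_start += valid_span


# Hypothetical table and column names, used only for illustration.
RANGE_QUERY = "SELECT * FROM quotes WHERE ts >= ? AND ts < ? ORDER BY ts"

for fold in walk_forward_folds(
    datetime(2023, 1, 1), datetime(2023, 3, 1),
    timedelta(days=30), timedelta(days=7),
):
    train_params = [fold.train_start, fold.train_end]
    valid_params = [fold.train_end, fold.valid_end]
    # Each parameter pair would be bound to RANGE_QUERY for that fold.
```

Using half-open ranges (`>=` start, `<` end) keeps the train and validation ranges of a fold from sharing an event at the boundary.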

I have used DuckDB several times before for finance applications, but never to actively process this much data, so I will report back if something goes wrong.